This is my Master of Science thesis for the Electronics and Telecommunications Engineering Department of the Electrical and Electronics Engineering Faculty of the Istanbul Technical University, Istanbul, Turkey. This version differs slightly from the original thesis: it has a nicer layout and a modified preface. I am also working on an online version of this thesis, which will hopefully be available at the URL:

http://turquoise.wpi.edu/aries

I would especially like to thank Yusuf Leblebici, who has been my much-travelling advisor. The Heisenberg Uncertainty Principle definitely applies to him: if you know where he is, you don't know what he is doing, and if you know what he is doing, you don't know where he is.

A big special thank you to my family, who have always been with me and supported me and to Öden Hotin who has treated me like his own child and protected me in Switzerland.

I would also like to thank my professors at the ITU (in no particular order): Erdal Panayırcı, for helping me with my average :-); Duran Leblebici, it is called HAL - not HALL; Uğur Çilingiroğlu, for talking me into staying at ITU; Osman Palamutçuoğulları, for keeping me interested in electronics; Bülent Örencik, for being an extremely nice person; Ergül Akçakaya, for the trust he put in me; Hakan Özdemir, you still owe me 10 Guinness.

I also want to mention my co-workers at the ITU: Bilgin, is that an Amiga ?; MVT, what do you mean by saying I can complete the missing parts ?; Bülent, I can't be ugly; Asım, Nafı yani?; Elif, This is Çomar, my cat; Murat, I need someone who understands from computers, to help me carry this PC; Ece, Let us go to the mining faculty for breakfast; Cem, the speedy sports reporter; Selçuk, Dear admin I have a question; Hakan, let us drink and be beautiful, Barış, Foton at your service, Bilge, do you call that a layout, and Müştak, from 10am Sunday to 1am Thursday, is that the faculty record ?

I'd like to thank Daniel Mlynek, for accepting me as a refugee and providing a splendid working environment, and all the friends at C3i-DE-EPFL: Alex, Alain, Paul, Theo, Francesco, Christophe, Laurent, Seong, Marc, Martin, Sophie, Frederic, Stephanie, Sylvain and Giorgio.

And last but not least to all my friends, for making my life so special: Erhan, Özlem, Orhan, Nezaket, Esra, Harun, Tandoğan, Turgut, Şima, Tuna, Nil, Berna, Torrence and the Dutch Gang, the Turkish Linux Users Group and all the rest that I forgot to mention.

Thank You !

January 1998

Frank Kagan Gürkaynak

Digital Signal Processing has evolved into one of the main applications of current VLSI technology. Recent developments within the electronics industry have resulted in new applications which require extensive signal processing. These applications have entered the consumer market, which has placed a high demand especially on multimedia applications.

Most digital signal processing operations can be expressed by means of convolutions, where an image data value and a set of neighbouring data values are convolved with a set of coefficients. This operation is especially computation intensive as it involves a number of multiplications. For high bandwidth signals such as images, these operations have to be performed repeatedly and continuously over a large set of data. As a result, the general nature of this operation requires a number of area-consuming high-speed multipliers.

In this work, a basic building block for the construction of convolutional filtering blocks is presented. This block (named Aries) uses pre-calculated values stored in a RAM to compute the result of a 5x1 kernel. These blocks can be combined to generate a filter of any dimensions (1-D or 2-D). Aries includes an output stage that can be used to generate a pipelined adder array to sum up the results of all Aries blocks. The block operates on 8-bit unsigned data at a rate of 50 million samples per second. This corresponds to an equivalent performance of 450 MOPS (Million Operations Per Second). A 9x9 filter realization would only need 18 Aries blocks, have an equivalent performance of more than 8 GOPS (Giga Operations Per Second) and occupy a silicon core area of less than 30 mm^2 even in a modest 0.8um CMOS technology. The full-custom design methodology employed throughout the design is presented as well.

Digital Signal Processing (DSP) technology is one of the more recent developments of the fast evolving electronics technology, and yet it is increasingly used in daily life. Cellular telephones, modems, CD-ROM devices, audio equipment, hard disks and automobile electronics are examples of the broad application spectrum of DSP devices [1]. The first programmable DSP processors appeared in the early 80's and were intended as audio processors. Within ten years, custom digital signal processing hardware became capable of processing video rate signals. The development of multimedia applications and standards led to another development: recently, general purpose processors started including specialized hardware to acquire basic signal processing capability.

Even the simplest DSP algorithms require extensive computation, and DSP operations in general are still considered to be among the most demanding operations in integrated circuit technology. A typical DSP operation consists of a series of multiplications of stored input data values with a set of coefficients, producing results which are then accumulated. As the signal has to be processed continuously, the bandwidth of the signal puts strict constraints on the speed of operation. As an example, for video applications 10 to 75 pictures need to be processed each second. Depending on the resolution, each picture may have as many as two million pixels, where each pixel is represented by (at least) 8 bits. Any video processing algorithm will therefore need to calculate each result within a time on the order of tens of nanoseconds.
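The timing constraint quoted above can be checked with a few lines of arithmetic. The sketch below is only an illustration: it assumes one result per pixel and uses the frame rates and the two-million-pixel picture size mentioned in the text. The function name is hypothetical.

```python
def time_per_pixel_ns(frames_per_second: float, pixels_per_frame: int) -> float:
    """Time available to produce one output pixel, in nanoseconds.

    Assumes exactly one result has to be computed per input pixel.
    """
    pixels_per_second = frames_per_second * pixels_per_frame
    return 1e9 / pixels_per_second


# Figures taken from the text: 10 to 75 pictures per second,
# up to two million pixels per picture.
slow = time_per_pixel_ns(10, 2_000_000)  # lowest frame rate
fast = time_per_pixel_ns(75, 2_000_000)  # highest frame rate
print(f"{fast:.1f} ns to {slow:.1f} ns per pixel")
```

Under these assumptions the budget per pixel lies between roughly 7 ns and 50 ns, which agrees with the order of magnitude stated above.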

High performance digital signal processing architectures rely on complicated designs, that are usually tailored for a particular application. Moreover, these designs are rather large and have transistor counts on the order of millions of transistors. These designs are usually parts of a custom chip-set for a specific application such as MPEG decoding. It is extremely hard to adapt these designs for new requirements as most of them have application oriented optimizations.

In this work, a programmable, fully pipelined compact LSI macro block (Aries) is presented, that can be used to design 1-D or 2-D convolutional filters of any size with a clock cycle of 20 ns. The design is extremely compact and occupies an active silicon area of less than 1.5mm^2 in a conventional 0.8um digital CMOS technology. Several Aries macro-blocks can therefore easily be embedded in a larger design. The fully pipelined 20 ns-cycle operation time is sufficient for high resolution image processing. Aries is fully programmable (at a lower speed than the data input rate), which gives it an adaptive filtering capability. Aries also includes the hardware necessary to accumulate the results of several Aries chips and can easily be scaled to accommodate digital filters of any dimensions.

There are three basic alternatives for the construction of digital filters [2]. The two commonly used alternatives employ multipliers. In Aries there are no multipliers; instead, the data is broken down into its bitplanes. The data bits of the same order are grouped to form a RAM address, and a pre-computed partial result is read from a RAM. The partial results corresponding to these data bits are then weighted and accumulated in an adder array to form a result.

In Chapter 2, a brief introduction to Digital Signal Processing is given, with an emphasis on the binary number systems used in digital systems. Aries is developed from earlier work on Taurus, a Capacitive Threshold Logic based image filter [3]. Chapter 3 briefly describes the basic architecture of Taurus and discusses the main problems encountered during design. The design of Aries is explained in detail in Chapter 4 (general structure), Chapter 5 (RAM design), Chapter 6 (adder design) and Chapter 7 (implementation). As a speed and area optimized design, Aries has been designed using a full-custom design methodology. Some aspects of this design methodology are explained in Chapter 8, using the design of one of the main blocks of Aries. Finally, Chapter 9 summarizes all the results.

Throughout the entire history of science, man has tried to understand, analyze, imitate and, most of all, control his surrounding environment. The limits of human capabilities were the dominant factors for most technical inventions. People felt cold, understood that fire would provide the necessary heat, and then went on to generate fire and control it. Many key inventions have played a major role in the history of civilization: the wheel, the invention of writing, bronze, gunpowder, the steam engine and integrated circuits have all changed the way of life dramatically. Today, thanks to the extended usage of integrated circuits, we are said to be living in an information age.

A very important breakthrough in information processing came with the introduction of integrated digital circuits. Digital circuits, unlike their analog counterparts, interpret their inputs as one of two logic states: logic "1" and logic "0". The output of any digital circuit consists of a set of these states. These two states are enough to define a binary number system in which any mathematical operation can be realized, which makes digital circuits ideal candidates for computational operations. Furthermore, digital information is much more immune to noise than analog signals, and there are practically lossless methods to store digital states. There is only one slight problem:

Every piece of natural information is strictly analog in nature: it consists of continuous signals. Fortunately, it is quite easy to express any analog value in terms of a number of digital states. Analog to Digital Converter (ADC) circuits are used to make this conversion. Digital circuits can then process, store and manipulate these signals. Once a result is available, Digital to Analog Converter (DAC) circuits can convert the digital value back into an analog signal.

Digital Signal Processing is a general term that describes specialized algorithms for signal processing using digital circuits. These signals can range from low frequency audio signals to high resolution image signals. Most DSP applications can be described as various filtering algorithms to enhance the original signal. Applications include:

New multimedia applications and standards (e.g. MPEG2, MPEG4) in particular require computation-intensive transformations to reduce the bandwidth and to enhance the quality of audio and video signals.

The basic digital unit is called a bit. One bit can only have one of two values: logic "0" or logic "1" (commonly referred to as 0 and 1). It is evident that one bit alone cannot hold much information. More bits can be joined to express larger numbers. With a total of n bits, 2^n different numbers can be expressed. Yet this alone does not describe how a number can be represented in digital form. There are several number systems with different capabilities and shortcomings.

The binary number systems are the most widely used number systems because of their simplicity. The basic binary number system is very similar to the decimal system we use. An n-bit number in the binary number system is an ordered sequence of bits:

$$A = \bigl(a(n-1),\, a(n-2),\, \ldots,\, a(1),\, a(0)\bigr), \qquad a(i) \in \{0, 1\} \tag{2.1}$$

This number exactly represents an integer or fixed-point number. If we consider only natural numbers, a direct representation would be possible. This is called an unsigned binary number representation. For a set of a(i) the corresponding value can be calculated as:

$$A = \sum_{i=0}^{n-1} a(i)\, 2^{i} \tag{2.2}$$

Numbers from 0 to 2^n-1 can easily be represented this way. If negative numbers are involved, a different approach needs to be taken. A solution analogous to the solution used in a decimal number system would be to use a digit to represent the sign. This representation, where the Most Significant Bit (MSB) of the sequence represents the sign of the number, is called the Sign-Magnitude Binary Number system. The value of A can be determined by:

$$A = (-1)^{a(n-1)} \sum_{i=0}^{n-2} a(i)\, 2^{i} \tag{2.3}$$

Although this format is relatively easy to comprehend, it has some important disadvantages. The number 0 can be represented in two different ways (one with a positive and one with a negative sign). A more significant problem is that positive numbers and negative numbers have to be treated differently in arithmetic operations. This is a major problem for operations that involve more than two operands, as the number of possibilities for the signs increases dramatically with the number of operands.

The Two's Complement representation of the binary number system is the more frequently preferred alternative for representing both positive and negative numbers. The value A of a two's complement number can be calculated as follows:

$$A = -a(n-1)\, 2^{n-1} + \sum_{i=0}^{n-2} a(i)\, 2^{i} \tag{2.4}$$

The negative value of any number with this representation can be calculated by inverting all of its bits (written here as $\overline{A}$) and adding one:

$$-A = \overline{A} + 1 \tag{2.5}$$

The most interesting feature of this representation is that for most of the basic arithmetic operations, digital computation blocks (with slight limitations) will perform correctly independent of the sign of the number. Table 2.1 gives all the combinations of a 4-bit number and lists their respective values for the three representations.

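Table 2.1: Values of a 4-bit number according to its representation.

| Bit sequence | Unsigned | Sign-Magnitude | Two's Complement |
|---|---|---|---|
| 0000 | 0 | +0 | 0 |
| 0001 | 1 | 1 | 1 |
| 0010 | 2 | 2 | 2 |
| 0011 | 3 | 3 | 3 |
| 0100 | 4 | 4 | 4 |
| 0101 | 5 | 5 | 5 |
| 0110 | 6 | 6 | 6 |
| 0111 | 7 | 7 | 7 |
| 1000 | 8 | -0 | -8 |
| 1001 | 9 | -1 | -7 |
| 1010 | 10 | -2 | -6 |
| 1011 | 11 | -3 | -5 |
| 1100 | 12 | -4 | -4 |
| 1101 | 13 | -5 | -3 |
| 1110 | 14 | -6 | -2 |
| 1111 | 15 | -7 | -1 |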
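The three interpretations listed in Table 2.1 can be reproduced with a short sketch. The helper names below are hypothetical and only serve as an illustration; bit strings are written MSB first, as in the table. Note that Python cannot distinguish -0 from +0, so the sign-magnitude pattern 1000 simply evaluates to 0.

```python
def unsigned_value(bits: str) -> int:
    """Interpret the bit string as a plain unsigned binary number."""
    return int(bits, 2)


def sign_magnitude_value(bits: str) -> int:
    """The MSB is the sign; the remaining bits are the magnitude."""
    magnitude = int(bits[1:], 2)
    return -magnitude if bits[0] == "1" else magnitude


def twos_complement_value(bits: str) -> int:
    """The MSB carries a weight of -2^(n-1); all other bits are positive."""
    n = len(bits)
    raw = int(bits, 2)
    return raw - (1 << n) if bits[0] == "1" else raw


def negate_twos_complement(bits: str) -> str:
    """Negate by inverting all bits and adding one (result kept to n bits)."""
    n = len(bits)
    mask = (1 << n) - 1
    inverted = int(bits, 2) ^ mask
    return format((inverted + 1) & mask, f"0{n}b")


# Print the same rows as Table 2.1.
for value in range(16):
    pattern = format(value, "04b")
    print(pattern,
          unsigned_value(pattern),
          sign_magnitude_value(pattern),
          twos_complement_value(pattern))
```

One of the "slight limitations" of two's complement shows up here as well: negating 1000 (-8) yields 1000 again, because +8 does not fit into four bits.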

The most important disadvantage of the binary number representation is that, for some small changes in value, a large number of bits within the number may change. As an example, for an 8-bit number, consider the representations of 63 (0011 1111) and 64 (0100 0000) (notice that this example is independent of the number representation). Although the distance between these two numbers is only one LSB, 7 out of 8 digits have changed their values.

The Gray number system is a non-monotonic representation where two consecutive numbers differ in only one of their digits. For some applications such as counters or low-power address bus decoders the Gray number system can help reduce the power consumption as for consecutive numbers there will be fewer transitions (and associated power consumption).

The main disadvantage of this number representation is that it is not well suited for arithmetic operations mainly because Gray numbers are non-monotonic. The lower power consumption issue is also arguable, as the extra power used for the generation of Gray numbers may decrease the aforementioned power savings considerably [4].
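The single-bit-change property can be illustrated with a small sketch. It assumes the common reflected binary Gray code, which the text does not name explicitly; the function names are hypothetical.

```python
def binary_to_gray(n: int) -> int:
    """Reflected binary Gray code of a non-negative integer."""
    return n ^ (n >> 1)


def gray_to_binary(g: int) -> int:
    """Inverse conversion: XOR together all right-shifted copies of g."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


def bits_changed(a: int, b: int) -> int:
    """Number of bit positions in which a and b differ."""
    return bin(a ^ b).count("1")


# The 63 -> 64 transition used as the example in the text.
print(bits_changed(63, 64))                                  # plain binary
print(bits_changed(binary_to_gray(63), binary_to_gray(64)))  # Gray coded
```

The back-conversion loop also hints at the cost discussed above: arithmetic on Gray numbers generally requires converting to ordinary binary first.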

Addition is by far the most important elementary operation within digital systems. The main difficulty with addition lies in the carry problem (also referred to as the curse of carry) [1]. The result of an operation on bits of the same magnitude can affect the result of higher order bits and is likewise affected by the result of lower order bits.

The redundant number systems are a result of this problem. The main idea is to represent one digit with more than one bit (hence the name redundant) to avoid carry propagation in adders. As a result of the redundant bits, a number represented in a redundant number system has higher storage requirements than a number represented in a non-redundant form. A second disadvantage is that the conversion from a redundant number system to a non-redundant number system is quite complicated.
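As an illustration only, the sketch below uses the carry-save form, one well-known redundant representation; the text does not state which redundant system is meant, so this choice is an assumption. Each digit position is held as a (sum, carry) pair, and three operands are reduced without any carry travelling along the word.

```python
from typing import Tuple


def carry_save_add(x: int, y: int, z: int) -> Tuple[int, int]:
    """Reduce three operands to a redundant (sum, carry) pair.

    Every bit position is processed independently, so no carry
    propagates from one position to the next.
    """
    partial_sum = x ^ y ^ z
    carry = ((x & y) | (x & z) | (y & z)) << 1
    return partial_sum, carry


def to_non_redundant(partial_sum: int, carry: int) -> int:
    """Final conversion; this is where a carry-propagating add is needed."""
    return partial_sum + carry


s, c = carry_save_add(13, 7, 9)
print(s, c, to_non_redundant(s, c))
```

The pair (s, c) needs twice the storage of the final result, and the last step still requires a conventional adder, which matches the two disadvantages named above.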

All the number systems discussed so far are so-called fixed point number systems. The floating point representation consists of a sign bit (S), a mantissa (M) and an exponent (E) in the form of:

$$A = (-1)^{S} \cdot M \cdot 2^{E} \tag{2.6}$$

Table 2.2 shows two IEEE standards for floating point representations.

Table 2.2: IEEE floating point standards.

| Standard | n | M bits | E bits | Range | Precision |
|---|---|---|---|---|---|
| single | 32 | 23 | 8 | 3.8E+38 | 1E-7 |
| double | 64 | 52 | 11 | 9.0E+307 | 1E-15 |
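The field layout of the single precision format in Table 2.2 can be inspected with Python's standard `struct` module. The sketch below is an illustration with hypothetical function names. It uses two details of the IEEE 754 format that the table does not spell out: the stored exponent is biased by 127, and normal numbers have an implicit leading one in the mantissa.

```python
import struct


def decode_single(value: float):
    """Split a value, rounded to IEEE single precision, into its raw fields."""
    (raw,) = struct.unpack(">I", struct.pack(">f", value))
    sign = raw >> 31                 # 1 sign bit
    exponent = (raw >> 23) & 0xFF    # 8 exponent bits (biased by 127)
    fraction = raw & 0x7FFFFF        # 23 stored mantissa bits
    return sign, exponent, fraction


def rebuild_normal(sign: int, exponent: int, fraction: int) -> float:
    """Recompute the value from its fields; valid for normal numbers only."""
    mantissa = 1.0 + fraction / 2 ** 23   # implicit leading one
    return (-1.0) ** sign * mantissa * 2.0 ** (exponent - 127)


fields = decode_single(-6.5)
print(fields, rebuild_normal(*fields))
```

Special cases (zero, denormals, infinities and NaN) use reserved exponent values and are deliberately left out of this sketch.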

Floating point operations require different operations for the mantissa and exponent parts of the number. General purpose processors used for scientific computing make use of floating point arithmetic by means of dedicated Floating Point Units (FPUs) to speed up computation intensive applications. Not more than five years ago these FPUs were usually auxiliary processors. Today all modern general purpose processors have embedded FPU blocks.

There are other number representations such as: Residue Number System, Logarithmic Number System and Anti-Tetrational Number System [5] whose details are beyond the scope of this text.

Once the data is represented in digital form, it can be processed with a large number of algorithms. Any specialized hardware that performs signal processing on digitally represented data can be called Digital Signal Processing hardware (DSP hardware). A few different families of DSP hardware can be identified [1]:

DSP processors:

These chips are essentially RISC architecture processors with specialized hardware for DSP algorithms. They can be programmed to perform a wide range of algorithms.

DSP cores:

These blocks are large macro-blocks with the functionality of a DSP processor that can be embedded in ASICs. These cores would include more specialized hardware for the algorithm.

Application Specific DSP Chips:

These chips are designed for a specific algorithm and are used where programmable approaches cannot achieve the required performance.

DSP algorithms have different requirements than generic computational algorithms. Multipliers and adders are indispensable blocks of any DSP algorithm. DSP algorithms operate on data arriving at a fixed rate, which puts strict restrictions on the speed of individual operations.

Another interesting aspect of DSP algorithms is that they frequently rely on the current as well as previous values of data. The only way to realize this is to use storage elements (registers or RAM). RAM access is a slow and complicated process, and large memory arrays are the least wanted blocks in any high speed design. As an example, a 512 x 512, 8-bit grayscale image needs a storage of approximately 2 million bits which, in turn, requires a considerable RAM capacity. No DSP algorithm can afford to have simultaneous access to all pixels of this image; it can at most concentrate on one part of the image.

The main problem however, lies in the I/O bandwidth. The source that will provide the data to the DSP block and the receiving end that will receive the processed data, typically have limitations on the available bandwidth.

The introduction of DSP specific hardware dates back to the early 80's. The first programmable DSP processors were mainly used for audio-band applications. In particular, the Texas Instruments 320c series of general purpose DSP processors was widely used for a wide range of applications. Strangely enough, contrary to the parallel developments in general purpose microprocessors or memory chips, the usage of DSP specific hardware did not expand continuously during the 80's. It took almost 10 years until developments in the electronics industry enabled DSP hardware that was fast enough for image processing. Image filtering, compression and coding are the three main fields for which a number of dedicated chipsets have been produced.

The last decade has seen an enormous increase in the capabilities and application areas of general purpose microprocessors. As a result of these developments, the average home computer user nowadays has computation power at the same level as that of leading research institutes not much more than ten years ago. There are a few key reasons for this accelerated development [6]:

As a result, we are continuously offered an increasing number of extremely powerful general purpose processors which enable many sophisticated and computationally complex problems to be solved by software running on these processors. The main advantages of these processors are obvious:

At the beginning of the 1980's, when the first DSP chips were introduced to the market, nobody would have believed that general purpose processors could outperform specialized signal processing processors. The initial boom of DSP chips faded silently, and especially after the introduction of MMX extensions to the majority of general purpose processors (which essentially add a number of specialized instructions for DSP), it is doubtful whether they can reconquer the market. While the future of DSP processors is not very bright, specialized DSP chips and macro-blocks will continue to play an important role in the future.

In this section, we present the overall architecture of a dedicated chip, Taurus, which is a fully pipelined real-time programmable 3x3 image filter [3,7] based on Capacitive Threshold Logic (CTL) [8]. This chip has essentially been designed to demonstrate the capabilities and possible applications of a new circuit technique (CTL) in digital signal processing. This material is presented to serve as preliminary background for the Aries architecture which will be introduced in Chapter 4.

The extensive research on CTL has resulted in a wide range of building blocks like multipliers, parity generators and population counters. CTL blocks have the advantage of realizing high fan-in building blocks with much lower complexity than their CMOS counterparts. As most of these blocks have costly CMOS realizations, traditional designs often tend to avoid algorithms employing functions with high fan-in. Having developed these fast and compact blocks, research intensified on the realization of complex digital systems using CTL building blocks.

A number of applications and algorithms have been examined for their suitability to include CTL building blocks [8,9,10,11]. The time-domain two dimensional convolution (among others) proved to be a suitable candidate for a CTL based application. The two dimensional convolution filter, depending on the window (or kernel) size of the filter, involves the multiplication of data values with their corresponding coefficients and the accumulation of the weighted sum of all the product terms. Thus an n x m filter needs n*m multiplications and n*m-1 additions, for a total of 2*n*m-1 operations per result. These operations can be defined in mathematical notation as:

(3.1)

where x(p,q) is an element of the input set, y(p,q) is the filter result corresponding to the input and c(i,j) are the coefficients of the n x m window (kernel). The realization of a 3x3 (n=m=3) image filter was targeted as a demonstration of the capabilities of the CTL blocks. Rewriting equation 3.1 for the given values of n and m we get

(3.2)

Figure 3.1: 3 x 3 convolutional filtering.
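The operation count given above can be verified with a direct software model of the 3x3 filter. The sketch below is an illustration under stated assumptions: the window is anchored at its top-left pixel, the kernel is not flipped, and only positions where the window lies fully inside the image are computed. None of these conventions are taken from the thesis, and the function name is hypothetical.

```python
def convolve_3x3(image, kernel):
    """Direct 3x3 filtering of a 2-D list of integers.

    Returns (output, multiplications, additions) so that the
    operation count per result can be checked.
    """
    rows, cols = len(image), len(image[0])
    output = []
    mults = adds = 0
    for p in range(rows - 2):
        out_row = []
        for q in range(cols - 2):
            acc = None
            for i in range(3):
                for j in range(3):
                    product = kernel[i][j] * image[p + i][q + j]
                    mults += 1
                    if acc is None:
                        acc = product      # first term needs no addition
                    else:
                        acc += product
                        adds += 1
            out_row.append(acc)
        output.append(out_row)
    return output, mults, adds


image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
kernel = [[1, 1, 1],
          [1, 1, 1],
          [1, 1, 1]]
result, mults, adds = convolve_3x3(image, kernel)
print(result, mults, adds)
```

For the four output pixels of this small example the model performs 36 multiplications and 32 additions, i.e. 9 and 8 per result, or 17 = 2*3*3-1 operations per result.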

A real time implementation of this filter will need to have access to three subsequent lines (rows of pixels) of the image at any time during its operation. Three new values, one from each line, need to be taken in at each clock cycle (Figure 3.1). Thus, at any given time, the hardware has access to all of these pixel values, and the 2-dimensional convolution function can be simplified into a 1-D FIR algorithm as follows:

(3.3)

where the indexes for the 2-dimensional position of the input pixel can be calculated from the 1-dimensional variable i using modulo and remainder operations:

(3.4)

and

(3.5)

Having this conversion from i to (p,q) in mind, equation 3.3 can be written in a more simplified way such as

(3.6)

A traditional high-speed implementation of this algorithm requires 9 parallel multiplier circuits to compute each product term, and an adder to calculate y(m) which increases the silicon area significantly [1].

A totally different approach to this problem can replace all multipliers with population counters. For image processing applications one may assume that x(m) is an 8-bit unsigned integer corresponding to the intensity of a pixel in the input image (although any other value might also have been used within the algorithm). Any x(m) can be written as

(3.7)

(3.8)

where b(m,j) ∈ {0,1} is the j-th bit of the m-th image pixel. Breaking down image pixels into bits leads to the definition of bitplanes, which are 2-dimensional arrays that have the same dimensions as the image, but consist only of the j-th bit of the image pixel values (see Figure 3.2). Substituting this representation of a binary number in Equation 3.6 we get:

(3.9)

Using the associative property, we can further modify this equation into

(3.10)

Figure 3.2: Bitplane representation of a binary coded image.

This re-arrangement is very important in understanding the operation principle of the Taurus architecture. The inner parenthesis which involves a multiplication is a special multiplication operation as one of the operands has only two possible values (b(m,j) element of {0,1}). Therefore, the result of the multiplication can be expressed as:

(3.11)

(3.12)

The inner sum is therefore only a conditional sum of the coefficients. Taurus uses a CTL based counter to generate this sum. An input switch matrix provides a programmable switch for each of the coefficients. If b(m(i),j)=1, c(i) inputs of a counter are made active. The counter simply counts the number of logic "1"s on its inputs, which is the sum of all coefficients that have a non-zero corresponding bit on the bitplane. Once this sum is generated for each bitplane of the image, the result is obtained by weighting and adding these sums. The j-th sum has a weight of 2^j, but this weighting is relatively easy to implement by means of a simple j-bit left shift. In fact, the shifting operation can be implemented within the adder at no extra cost.
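The bitplane re-arrangement described above can be checked numerically. The following sketch is a behavioural illustration only, not a model of the Taurus circuit: it assumes non-negative integer coefficients (since a counter can only count active inputs) and 8-bit unsigned pixels. The function names and the example values are hypothetical.

```python
def direct_sum(pixels, coeffs):
    """Reference result: one multiplication per coefficient."""
    return sum(c * x for c, x in zip(coeffs, pixels))


def bitplane_sum(pixels, coeffs, bits=8):
    """Multiplier-free result built from one conditional sum per bitplane."""
    result = 0
    for j in range(bits):
        # Conditional sum: add c(i) only where bit j of pixel i is 1.
        # In Taurus this is the job of one population counter.
        plane_sum = sum(c for c, x in zip(coeffs, pixels) if (x >> j) & 1)
        # Weight the j-th partial sum by 2^j with a left shift.
        result += plane_sum << j
    return result


pixels = [12, 255, 0, 7, 128, 64, 33, 200, 91]   # a 3x3 window, row by row
coeffs = [1, 2, 1, 2, 4, 2, 1, 2, 1]             # example smoothing weights
print(direct_sum(pixels, coeffs), bitplane_sum(pixels, coeffs))
```

Both functions return the same value for any 8-bit input, but the second one uses only comparisons, additions and shifts, which is the property the counter-based architecture exploits.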

Threshold Logic (TL) emerged in the early 1960's as a unified theory of logic gates, which includes conventional switching logic as a subset. The formal TL gate can perform not only AND/OR primitives but any linearly separable Boolean function [12]. But despite its theoretically obvious merits, until recently TL has never had a significant impact in practice, due to the limited success achieved in developing a suitable TL gate on silicon.

The similarities between the functionality of a TL gate and the hard limiting neuron structures used in artificial neural networks have led to the development of Capacitive Threshold Logic (CTL) gates [8].

The CTL circuit technique offers a successful implementation of threshold functions using conventional CMOS processes. A threshold gate is defined as an m input gate where the output Y is defined as follows:

(3.13)

(3.14)

where X(j) is the j-th binary input with a corresponding weight of W(j). A basic CTL gate of m inputs, as shown in Figure 3.3, comprises a row of weight-implementing capacitors C(i) and a chain of inverters which functions as a voltage comparator.

Figure 3.3: Basic circuit diagram of a Capacitive Threshold Logic (CTL) gate.
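The logical behaviour of a threshold gate (not its capacitive implementation) can be sketched in a few lines. The model below assumes the common convention that the output is 1 when the weighted input sum reaches or exceeds a threshold T; whether the comparison in the definition above is strict is an assumption here, and the function name is hypothetical.

```python
from itertools import product


def threshold_gate(inputs, weights, threshold):
    """Return 1 if the weighted sum of the binary inputs reaches the threshold."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum >= threshold else 0


# With unit weights, the threshold alone selects the function of a 3-input gate:
# T=1 gives OR, T=2 gives majority, T=3 gives AND.
for bits in product((0, 1), repeat=3):
    print(bits,
          threshold_gate(bits, (1, 1, 1), 1),
          threshold_gate(bits, (1, 1, 1), 2),
          threshold_gate(bits, (1, 1, 1), 3))
```

This shows why a single high fan-in threshold gate can replace several conventional gates: the same structure realizes different linearly separable functions simply by changing weights and threshold.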

The CTL gate operates with a two-phase non-overlapping clock consisting of a reset phase defined by the clock phi-R and an evaluation phase defined by phi-e. During the reset phase, the row voltage Vrow is reset to the threshold voltage of the first inverter, Vth1. The bottom plates of all input capacitors are forced to ground potential while all the threshold capacitors have their bottom plates connected to VDD. The total charge accumulated in the row during reset can be calculated as follows:

(3.15)

where Vth1 is the inversion (logic) threshold voltage of the first inverter within the comparator and Vth2 is the inversion (logic) threshold voltage of the inverter in the dummy column. In the evaluation phase all binary inputs are forced onto the m input columns while the threshold capacitors are connected to ground potential. The amount of charge in the row, for a given set of inputs, can be calculated as follows:

(3.16)

Since the row charge is retained (charge conservation) during both operational phases, the row voltage perturbation during the evaluation phase becomes:

(3.17)

The row perturbation is equal to the difference between row voltage in reset and evaluation phases.

(3.18)

Substituting this in Equation 3.17 yields

(3.19)

Notice that the first inverter of the comparator circuit is biased exactly at inversion threshold Vth1, which is also the operating point with the highest gain, at the beginning of the evaluation phase. If the voltage perturbation Delta Vrow is positive, the first inverter output will tend to drop to logic "0" and the second inverter will switch to logic "1". If the voltage perturbation Delta Vrow is negative, the opposite will happen.

(3.20)

(3.21)

It can be seen that Equations 3.20 and 3.21 correspond to the basic threshold gate Equations given in 3.13 and 3.14.

The CTL-based parallel counter (i.e. population counter) circuit constitutes the heart of the Taurus architecture [10]. A parallel counter is a multi-input, multi-output combinational logic circuit which determines the number of logic "1"s in its input vector and generates a binary encoded output vector which corresponds to this number.

A traditional CMOS realization of a parallel counter involves a number of full adders (FA's) arranged in a tree like structure (See Figure 3.4). Swartzlander [13] reports the number of FA's for an m-input population counter as

(3.22)

Figure 3.4: Full Adder (FA)-based realization of a (31:5) parallel counter.
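To give a feeling for the cost of the full adder approach, the sketch below builds a parallel counter recursively from full adders and counts how many are used. It is one common tree arrangement for 2^k - 1 inputs, chosen here as an assumption; it is not necessarily the exact wiring of Figure 3.4, and the function names are hypothetical.

```python
def full_adder(a: int, b: int, c: int):
    """One-bit full adder: returns (sum, carry)."""
    return a ^ b ^ c, (a & b) | (a & c) | (b & c)


def parallel_counter(bits):
    """Count the ones in `bits` (length 2^k - 1) using only full adders.

    Returns (output bits, LSB first, number of full adders used).
    """
    m = len(bits)
    assert m >= 1 and (m + 1) & m == 0, "length must be 2^k - 1"
    if m == 1:
        return [bits[0]], 0
    half = (m - 1) // 2
    left, fa_left = parallel_counter(bits[:half])
    right, fa_right = parallel_counter(bits[half:2 * half])
    carry = bits[-1]                    # the remaining input enters as carry-in
    fa_count = fa_left + fa_right
    out = []
    for a, b in zip(left, right):       # ripple-add the two partial counts
        s, carry = full_adder(a, b, carry)
        out.append(s)
        fa_count += 1
    out.append(carry)
    return out, fa_count


inputs = [1, 0, 1, 1, 0] * 6 + [1]      # 31 inputs, 19 of them set
out_bits, fa_used = parallel_counter(inputs)
count = sum(bit << i for i, bit in enumerate(out_bits))
print(count, fa_used)
```

For this particular construction a (31:5) counter needs 26 full adders, and several of them lie in series along the carry path, which illustrates why a compact threshold logic realization is attractive.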

Different threshold logic realizations of the population counter exist in the literature [14,15,16]. The CTL realization of the parallel counter used in this work is based on the two level Minnick counter (Figure 3.5).

Figure 3.5: Threshold Logic realization of a Minnick type (31:5) parallel counter.

The basic architecture of Taurus comprises the building blocks given in Figure 3.6:

Figure 3.6: General Architecture of the Taurus image filter chip.

Input registers:

At any given time the Taurus architecture needs access to a 3x3 snapshot of the image. Three rows of shift registers with a depth of three are used to store this snapshot.

Switch matrix:

Each bit within the input data has a corresponding weight. For a logic "1" bit, this weight is applied to the inputs of the counters. The switch matrix is used to store the filter weights. Each element within the switch matrix is triggered by a different bit of the input image and can activate a programmable number of counter inputs.

The CTL counters:

For an 8-bit image, eight independent counters are used to accumulate the weights of corresponding bits. The switch matrix activates a number of inputs on each counter depending on the value of the corresponding bit. The total number of active inputs is accumulated by the CTL counter blocks.

Systolic adder tree:

The output of each CTL counter corresponds to a partial sum of one bitplane of the image. All eight partial sums need to be added to get the result. Since each bitplane has a different weight, they cannot be added directly. The systolic adder tree shifts (and thereby multiplies) the partial results according to their rank and accumulates the final result.
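The interplay of the four blocks can be summarized in a small behavioural sketch in Python. It is only a functional model under simplifying assumptions: the function name is hypothetical, the weights are small non-negative integers, and the pipelining and the hardware structure of the counters are ignored.

```python
def bitplane_weighted_sum(pixels, weights, bits=8):
    """Weighted sum of `pixels`, computed one bitplane at a time."""
    result = 0
    for plane in range(bits):
        # Switch matrix and counter: every pixel whose bit in this plane
        # is "1" activates as many counter inputs as its weight.
        partial = sum(w for p, w in zip(pixels, weights) if (p >> plane) & 1)
        # Adder tree: shift the partial sum according to the rank of its plane.
        result += partial << plane
    return result


# A 3x3 snapshot (row by row) and a smoothing kernel as an example.
snapshot = [12, 255, 0, 7, 128, 33, 90, 1, 200]
kernel = [1, 2, 1, 2, 4, 2, 1, 2, 1]
filtered = bitplane_weighted_sum(snapshot, kernel)
```

The result is identical to the conventional sum of products, although no multiplication is performed.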

This basic architecture has been refined to achieve higher performance.

Figure 3.7 shows the final detailed block diagram of the Taurus architecture with all these improvements and the pipeline registers.


Figure 3.7: Detailed block diagram of the Taurus architecture.


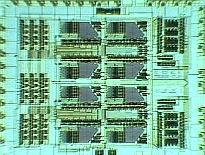

To evaluate the performance of the proposed architecture, a test chip has been designed and developed (see Figure 3.8) using conventional 1.2um double-poly double-metal CMOS technology. The chip contains a fixed-weight version of the processing core which realizes a (3 x 3) smoothing function. The switch matrix array, which is used to facilitate coefficient programming, has been omitted to simplify testing. The realized test structure contains three eight-bit-wide and three-bit-deep input shift registers which facilitate simultaneous input of three eight-bit pixels during each cycle, the corresponding bitplane multiplexers, eight CTL-based parallel (30,5) counters, and two systolic adder arrays. Edge-triggered dynamic CMOS register cells were used for all pipeline registers. The parallel counters and the adder arrays each form separate pipeline stages in order to reduce the stage delays (these blocks can be seen in Figure 3.7 as shaded blocks).


Figure 3.8: Microphotograph of the fabricated Taurus core test chip.

Extensive post-layout simulations have shown that the signal propagation delay associated with the CTL based parallel counters is about 10 ns, whereas the overall delay of the CMOS adder array stage is well below 10 ns, using a supply voltage of 5 V. Thus, the maximum clock frequency can be as high as 100 MHz, allowing real-time processing of high-resolution (1024 x 1024) pixel images at a rate of 50 frames per second.
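The frame rate quoted above can be checked with a few lines of Python. The sketch assumes that the core delivers one filtered pixel per clock cycle, which is an interpretation of the pipeline description rather than a figure stated explicitly in the text; the function name is hypothetical.

```python
def required_pixel_rate(width, height, frames_per_second):
    """Number of pixels per second that must be processed."""
    return width * height * frames_per_second


rate = required_pixel_rate(1024, 1024, 50)   # 52,428,800 pixels per second
clock_hz = 100e6                             # maximum clock frequency from the text
headroom = clock_hz / rate                   # assumed: one pixel per clock cycle
```

Under this assumption the 100 MHz clock leaves a margin of a little under a factor of two over the required pixel rate.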

The core area of the implemented test chip (1.8 mm x 1.5 mm) compares very favourably with other image filters using a similar fabrication technology. About 60% of the active area of the chip is occupied by the CTL counters and their input and output registers, while the systolic adder arrays occupy around 15% of the total area.

Although testing was hampered for various reasons, the calculation time for a single vector was measured to be around 7.9 ns [7], which is well under the design target of 10 ns.


A number of problems in both the design and the implementation of the algorithm were detected after careful analysis of the Taurus design:

A number of solutions to the above-mentioned problems were evaluated, and as a result of this research Aries was developed. In Aries the basic idea of computing a convolution without conventional multiplier cells, by breaking up the input (image) data into bitplanes, remained the same, but RAM blocks were used instead of CTL blocks to calculate the bitplane results.


Two main problems in the design of Taurus became primary considerations in the design of Aries: scalability and programmable coefficients with a higher dynamic range.

Although many applications could make use of a 3x3 image filter, a scalable building block is much more desirable than a block with a fixed kernel size. Any kernel with size M x N can be realized by accumulating the results of a number of kernels with smaller sizes m x n where M >= m and N >= n. It can be shown that the number of such m x n kernels needed is:

(4.1)


Scaling of the filter kernel along the Y axis does not impose extra restrictions on the design, but in order to scale along the X axis, the block must be able to provide the outputs of its internal shift registers to the next stage. If this is not the case, the same delay must be provided externally to the block. This would result in a non-uniform structure, as the first block would need no delay elements, the second would need m delay elements, the third 2m, and so on. A final problem arises in the addition of all the block results. As the complexity of the addition increases with the number of blocks included, it is not convenient to leave this task outside the block. The block needs to provide some sort of support for the addition of a number of results.
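The scaling argument can be made concrete with a short Python sketch. Two points are assumptions of this illustration and not taken from the thesis: the block count is computed as the product of the rounded-up ratios M/m and N/n (a plain tiling of the large kernel), and the function names are hypothetical. The second part shows a long one-dimensional kernel realized with m-tap blocks, where block j receives the input delayed by j*m samples and all partial results are added.

```python
import math


def blocks_needed(M, N, m, n):
    """Number of m x n blocks tiling an M x N kernel (assumed form)."""
    return math.ceil(M / m) * math.ceil(N / n)


def fir(samples, coeffs):
    """Direct form: y[t] = sum_i coeffs[i] * samples[t - i], zero before start."""
    out = []
    for t in range(len(samples)):
        acc = 0
        for i, c in enumerate(coeffs):
            if t - i >= 0:
                acc += c * samples[t - i]
        out.append(acc)
    return out


def tiled_fir(samples, coeffs, m=5):
    """Same result built from m-tap blocks fed with delayed copies of the input."""
    out = [0] * len(samples)
    for j in range(0, len(coeffs), m):
        block = coeffs[j:j + m]
        # Block number j/m needs the input delayed by j samples.
        delayed = ([0] * j + list(samples))[:len(samples)]
        partial = fir(delayed, block)
        # Adder chain: accumulate the results of all blocks.
        out = [a + b for a, b in zip(out, partial)]
    return out
```

For example, `blocks_needed(5, 5, 5, 1)` gives 5, i.e. five 5x1 blocks for a 5x5 kernel, and `tiled_fir` reproduces `fir` for a 12-tap kernel using three 5-tap blocks.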

Integer coefficients of up to 15 might be reasonable (though more would be desirable) for a 3x3 filter, but filters with a larger kernel usually need a higher coefficient range. A possible solution is to scale all coefficients of one block by a fixed number, thus changing the range of the coefficients locally. This might be applicable to a number of applications, but it is not a complete solution.

Increasing the coefficient range within the Taurus architecture is only possible by adding more parallel counter blocks. The increase in the number of counters would also complicate the internal routing to an almost unmanageable level, while further complicating the later adder stages. An algorithmic improvement is therefore unavoidable if a significantly higher coefficient dynamic range is to be achieved.

Aries is designed to be a building block for convolutional filters, and the main design issue is scalability. Therefore the design has to:

As scaling in the Y dimension did not incur any extra complexity penalty, a one-dimensional structure was used for Aries. Almost all filters have odd-sized dimensions, and therefore an even-sized block would not be very practical. A 3x1 block proved to be too small to justify the overhead involved in the architecture, and blocks larger than 5x1 would have too much redundancy for small to medium sized filters (which usually are 3x3 or 5x5). As a result, Aries was designed to provide the functionality of a 5x1 one-dimensional convolution filter that could be expanded to realize 1D and 2D convolutional filters of any complexity.


Figure 4.1: General block diagram of the Aries architecture.

The basic DSP algorithms implemented by the Taurus and Aries architectures are very similar. The general block diagram of the Aries architecture is shown in Figure 4.1. Aries has a 5-level shift register on its input (as opposed to 3 in Taurus) to have simultaneous access to all the data elements. The main difference between the two designs lies in the calculation of the conditional sums of the coefficients on a bitplane. The CTL-based parallel counters in the Taurus architecture are replaced by RAM blocks in the Aries architecture. Once the problem of calculating the result

(4.2)

has been reduced to adding the conditional sums of all bitplanes,

(4.3)

it was shown that the inner sum could be calculated by simply adding or neglecting the associated coefficient c(i) depending on the value of b(m). As the block only needs five values of the input source, this sum is a linear combination of five coefficients. Different algorithms can be devised to calculate the sum of coefficients of any length, but as long as the coefficients do not change there will be only 2^5=32 different results, depending on the 5-bit value of the input pattern. If a 32-word memory is used to store all the possible results (note that in the case of fixed coefficients, a simple ROM can be used for this purpose), this sum can be calculated independently of the coefficient representation. To provide programmability, a RAM structure was used in Aries. As the delay of reading data from RAM is independent of the word size, coefficients of any length can be used. Table 4.1 lists all possible conditional sums depending on the input data.

Table 4.1: All possible conditional sums as a function of input data.

| Data | Sum | Data | Sum |
|-------|-------------|-------|----------------|
| 00000 | 0 | 10000 | c5 |
| 00001 | c1 | 10001 | c5+c1 |
| 00010 | c2 | 10010 | c5+c2 |
| 00011 | c2+c1 | 10011 | c5+c2+c1 |
| 00100 | c3 | 10100 | c5+c3 |
| 00101 | c3+c1 | 10101 | c5+c3+c1 |
| 00110 | c3+c2 | 10110 | c5+c3+c2 |
| 00111 | c3+c2+c1 | 10111 | c5+c3+c2+c1 |
| 01000 | c4 | 11000 | c5+c4 |
| 01001 | c4+c1 | 11001 | c5+c4+c1 |
| 01010 | c4+c2 | 11010 | c5+c4+c2 |
| 01011 | c4+c2+c1 | 11011 | c5+c4+c2+c1 |
| 01100 | c4+c3 | 11100 | c5+c4+c3 |
| 01101 | c4+c3+c1 | 11101 | c5+c4+c3+c1 |
| 01110 | c4+c3+c2 | 11110 | c5+c4+c3+c2 |
| 01111 | c4+c3+c2+c1 | 11111 | c5+c4+c3+c2+c1 |
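The contents of Table 4.1 and their use can be reproduced with a short Python sketch. The function names are hypothetical, and the assignment of the five samples to the address bits (sample i drives address bit i, with c1 on the least significant bit as in the table) is an assumption of this illustration.

```python
def build_conditional_sum_table(coeffs):
    """32-word table: address bit i set means coeffs[i] is included in the sum."""
    return [sum(c for i, c in enumerate(coeffs) if (addr >> i) & 1)
            for addr in range(1 << len(coeffs))]


def lookup_weighted_sum(samples, table, bits=8):
    """Weighted sum of the samples using one table read per bitplane."""
    result = 0
    for plane in range(bits):
        # The bits of one bitplane of all five samples form the RAM address.
        addr = 0
        for i, s in enumerate(samples):
            addr |= ((s >> plane) & 1) << i
        # Shift the conditional sum by the rank of the bitplane and accumulate.
        result += table[addr] << plane
    return result


coeffs = [3, -1, 4, 1, 5]            # c1 .. c5, arbitrary example values
table = build_conditional_sum_table(coeffs)
samples = [17, 200, 3, 64, 255]
result = lookup_weighted_sum(samples, table)
```

Since the table stores finished sums, the width and the sign of the coefficients have no influence on the lookup itself, only on the word length of the memory.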

Aries also uses the time multiplexing scheme to operate on only 4 bitplanes at any given time. As each bitplane may have different data bits to be processed simultaneously, four different RAM structures have to be used. But since all of the RAMs would hold exactly the same information, it is not necessary to have four completely separate RAM blocks. Figure 4.2 shows three alternatives:

Figure 4.2: Three alternative designs for the RAM blocks which are to be used to store the conditional sums.

Of the different RAM realizations, the Static RAM (SRAM) realization was used in the design of the Aries architecture, because it is easier to design and more robust than the other alternatives. The double dual-port alternative was used in the design, since the basic dual-port RAM cell was not much more complex than the single-port RAM cell, keeping the overall size of the RAM block compact. The quad-port RAM would have benefited from maximum resource sharing, but its basic cell proved to be too complex, as the extra routing overhead nearly cancelled out all the area gained by using a single RAM array. The dual-port architecture had another advantage: as the RAM is laid out in a column, a totally symmetric structure could be used for both ends of the column (although this advantage was later given up in favour of shorter signal routing paths).

The outputs of the RAMs are later shifted and added in a systolic adder that is similar to the one used in Taurus. There is an interesting speed/accuracy trade-off parameter that can be adjusted within these two blocks. As mentioned earlier, the speed of the RAM is independent of the word width, whereas the speed of the systolic adder depends heavily on the word width of its operands. As these blocks are in different pipeline stages, they can be optimized to match each other, minimizing the pipeline redundancy.

The results from the upper and lower parts of the blocks are merged in a separate accumulating adder, which again has the same structure as the accumulator that was used in Taurus. The output of this accumulator is the actual result of the convolution operation. This value corresponds to the new value of the data, but it has to be reformatted to the same range as the input: an 8-bit unsigned integer value. This involves some sort of truncation and rounding, which depends heavily on the coefficients and the input data ranges.

At this point the data is ready for output. The block includes an additional adder stage that can be configured to be part of an adder chain to add the results of all blocks in the filter.


Figure 4.3: General floorplan of the Aries DSP engine.

Figure 4.3 shows the basic floorplan of the Aries DSP engine. The dashed line in the figure shows the flow of data within the blocks. Rough pre-design estimations suggested the size of the final layout to be between 2mm^2 and 4mm^2 using the 0.8um double-metal CMOS technology of Austria Micro Systems (AMS). The extremely compact size and the high flexibility of the Aries architecture make it possible to construct even the most demanding and complex image filters within a single chip.

Aries consists of several independent blocks (see Figure 4.1):


The Aries architecture computes the weighted sum of five consecutive signal values. A simple structure consisting of five shift registers is used to store these consecutive values. The 8-bit input data is sampled at the rising edge of the first clock cycle by the first register. The output of the first register is connected to a second one, and at the second rising edge the data is sampled by the second-stage registers while at the same time the first-stage registers sample new data. After the first five clock cycles, the block has access to five consecutive data values and can calculate a weighted sum for these values. At each new clock cycle a new value enters the shift registers and the last value is pushed out of the block.
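The behaviour of the input shift registers can be modelled in a few lines of Python. This is a purely functional sketch with a hypothetical function name; clocking and the 8-bit word width are not modelled.

```python
from collections import deque


def sliding_windows(stream, depth=5):
    """Yield the register contents once all `depth` stages hold valid data."""
    regs = deque(maxlen=depth)
    for sample in stream:
        # The new sample enters the first register; when the chain is full,
        # the oldest sample is pushed out at the other end.
        regs.appendleft(sample)
        if len(regs) == depth:
            yield tuple(regs)


windows = list(sliding_windows([1, 2, 3, 4, 5, 6]))
# windows == [(5, 4, 3, 2, 1), (6, 5, 4, 3, 2)]
```

The first window becomes available after five samples; from then on every new sample produces a new window.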

The Aries architecture is designed as a pipeline structure to speed up the operation. The signal flow is broken down into several sub-blocks separated by registers. At each clock cycle, the output of each block is sampled by a pipeline register. At the same time the result of the previous block is sampled by another register and is made available as the input of that block. Each block has a fixed time, determined by the clock rate of the pipeline registers, to compute its result.

Instead of calculating all results for 8 bits in n clock cycles, the architecture consists of inner blocks that operate on 4-bit data and produce a new result in n/2 clock cycles. The outputs of the shift registers have a simple multiplexer structure that selects the lower-order 4 bits while the clock signal is high and the higher-order 4 bits while the clock signal is low. This 4-bit partial data is processed with a faster clock of frequency 2f within the inner blocks, and the partial results are joined in the accumulator block. The general pipeline structure of the Aries architecture is shown in Figure 4.4.


Figure 4.4: Timing diagram of the pipeline.
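The time multiplexing of the two 4-bit halves can be sketched functionally in Python. The names are hypothetical and the model ignores the pipeline registers; it only shows that processing the lower and the higher nibble separately and merging them in the accumulator gives the same weighted sum. The mapping of sample i to address bit i is again an assumption.

```python
def conditional_sum_table(coeffs):
    """All 2**m conditional sums; address bit i selects coeffs[i]."""
    return [sum(c for i, c in enumerate(coeffs) if (addr >> i) & 1)
            for addr in range(1 << len(coeffs))]


def half_cycle(samples, table, nibble):
    """One period of the 2f clock: the four bitplanes of the selected nibble."""
    partial = 0
    for k in range(4):
        plane = 4 * nibble + k
        addr = sum(((s >> plane) & 1) << i for i, s in enumerate(samples))
        partial += table[addr] << k     # adder: rank within the nibble
    return partial


def aries_block(samples, coeffs):
    """Weighted sum of five 8-bit samples from two 4-bit passes."""
    table = conditional_sum_table(coeffs)
    low = half_cycle(samples, table, 0)     # clock high: bits 3..0
    high = half_cycle(samples, table, 1)    # clock low: bits 7..4
    return low + (high << 4)                # accumulator merges both halves


value = aries_block([200, 17, 255, 0, 99], [1, 4, 6, 4, 1])
```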

The design of the pipeline registers is a very important phase of any pipeline based design. Generally, a large number of registers have to be used. As an example, Aries uses 170 pipeline registers throughout the design. Table 4.2 lists the distribution of registers for various blocks. As mentioned earlier, each block is allotted a certain time to finish the calculations before the output is sampled by the output register. For extremely fast pipeline stages the sampling speed of the register itself is a critical factor. In Aries the inner blocks (which operate on a faster clock) are designed to operate in a 10 ns pipeline. This 10 ns includes the calculation delay of the block plus the sampling time of the register. Therefore, it is highly desirable to have a register that is extremely fast (<1 ns).

Table 4.2: Number of registers used throughout Aries.

| Name | Number | Clock |
|-----------------------|-----------|-------|
| Input Shift Registers | 5*8 = 40 | f |
| RAM outputs | 4*10 = 40 | 2f |
| Sys. Adder outputs | 13 | 2f |
| Accumulator | 13 | f' |
| Accumulator Outputs | 16 | f |
| Output Stage | 16 | f |
| Final Adder | 2*16 = 32 | f |
| TOTAL | 170 | |

Two basic circuits are widely used to realize pipeline registers. The most common solution is to use a latch. A simple and efficient latch can be realized with as few as 4 transistors. The main problem is that the circuit requires two complementary clocks. The second alternative is to use a D-type flip-flop (DFF), which is edge-triggered (in contrast to the latch, which is level-sensitive). DFFs are usually preferred over simple latches in many applications for their relative robustness, even though the circuit complexity of a typical DFF is usually twice that of a simple latch, due to the master-slave configuration. All registers within Aries are realized as modified Yuan-Svensson D-type flip-flops [18], whose circuit schematic is given in Figure 4.5.


Figure 4.5: Schematic of the Yuan-Svensson D-type flip-flop.

The Yuan-Svensson flip-flop is one of the fastest and most compact D-type flip-flop realizations available. The circuit is based on the True Single Phase Clock (TSPC) principle and requires a single data and a single clock signal for operation. Its high operation speed and low transistor count make it an interesting alternative to traditional latch architectures. Figure 4.6 shows a simulation result of the Yuan-Svensson D-type flip-flop (all nMOS transistors W=2um, and all pMOS transistors W=5.6um). The simulation shows that even without aggressive transistor sizing, the Yuan-Svensson D-type flip-flop is able to operate with a delay of much less than 1 ns.


Figure 4.6: Simulation result of the Yuan-Svensson D-type flip-flop.

The 8-bit outputs of the shift registers are fed pairwise into a multiplexer. This multiplexer selects the lower-order 4 bits for the high value of the clock signal and the higher-order 4 bits for the low value of the clock. The output of each multiplexer in a shift register block drives a separate address line of the RAM. Combining the outputs of the multiplexers in five rows, four different RAM addresses of five bits each are generated. A simple 2:1 multiplexer circuit would be sufficient for read-only operation. For write operations the address lines of the RAM blocks must be controlled separately. A third mode, in which the address lines are tied to an external data bus, is used to create a simple interface to the outside world. Figure 4.7 shows the block schematic of the input stage.


Figure 4.7: Block mode schematic of the input registers.

The shift registers are laid out in two separate blocks in order to drive the address decoders of the dual-port RAM structure. Figure 4.8 shows the layout of one of the input register blocks. The layout consists of two separate paths, each having 2 x 5 Yuan-Svensson D-type flip-flops, and each register pair is followed by a three-input multiplexer. The external address lines are shared by both paths, and they are located in the middle of the bus seen at the center of the cell. All clock and power signals are routed horizontally over the cells. The layout occupies an area of 320um x 140um.


Figure 4.8: The layout of the input shift registers.


The main algorithm implemented by the Aries architecture is based on the fact that for m inputs, (regardless of the complexity of the data and coefficients involved) there can be only 2^m different results, all of which can be stored within a processing pipeline to reduce computational complexity. This is only possible if:

A number of factors need to be evaluated to see if the above mentioned requirements can be met. It is clear that the efficiency heavily depends on the number m, the data storage method and the width of coefficients as well as width of data involved in the convolution.

Generally speaking, for a convolution over m input cells with a data width of b bits with a set of kernel coefficients to get a result k bits wide, the total amount of required storage can be expressed as

$$N_{total} = b \cdot 2^{m} \cdot k \qquad (5.1)$$

It must be noted that the storage for each bitplane will be identical, resulting in

$$N_{unique} = 2^{m} \cdot k \qquad (5.2)$$

unique storage cells. These two expressions clearly show that regardless of the storage method a memory based solution is not very efficient for large values of m. Yet for a simple building block, large values of m are not needed. Even values of m are also not very practical as most convolutions used in DSP algorithms need the data point plus an equal number of neighbours, commonly resulting in a matrix of odd dimensions. Table 5.1 compares the amount of memory to the number of multiplications (assuming k=10) for selected values of m.

Table 5.1: Number of operations.

| m | N-unique | 8x8 Multiplications |
|---|----------|---------------------|
| 3 | 80 | 3 |
| 5 | 320 | 5 |
| 7 | 1280 | 7 |
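The entries of Table 5.1 can be reproduced with a small Python sketch. It assumes that the unique storage consists of 2^m words of k bits each, which matches the tabulated values for k=10, and that the total storage is this amount repeated once per bitplane of the b-bit data. The function names are hypothetical.

```python
def unique_storage_bits(m, k):
    """Storage cells for one bitplane: 2**m conditional sums of k bits each."""
    return (2 ** m) * k


def total_storage_bits(m, k, b):
    """Storage cells if every one of the b bitplanes had its own memory."""
    return b * unique_storage_bits(m, k)


table_5_1 = {m: unique_storage_bits(m, k=10) for m in (3, 5, 7)}
# table_5_1 == {3: 80, 5: 320, 7: 1280}
```

The exponential growth with m is evident: each two additional inputs quadruple the memory, while the number of multiplications replaced grows only linearly.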

In this work, m=5 was found to be the more reasonable candidate, as its memory requirement is relatively small yet large enough to justify the peripherals associated with the storage elements. At first sight, the amount of computation that the storage replaces may not seem significant. However, multiplication is one of the most demanding and area-consuming operations in the digital domain. Aries, as an example, uses m=5, and the storage elements can deliver the result of 5 multiplications in 10 ns, in a technology where a well-designed full adder has a delay of about 1 ns. The same performance can only be achieved by using at least two multiplier blocks. Moreover, the delay of the storage elements is independent of the coefficient dynamic range; that is to say, a result of any bit length will be delivered at the same rate as the 10-bit result. This allows the bit length to be optimized so that the delays of all pipeline stages are carefully balanced to match the speed of the storage elements, since increasing word lengths mean increased processing in the subsequent combinational adder stages.


It is a known fact that the flagship of integrated circuit development is the realization of Random Access Memory devices. Current technology is often referred to in terms of storage elements per chip (such as 1 G-bit technology). Yet many of these advanced technologies are not available for common digital designs, and large on-chip memories are not desirable, mainly because of their area requirements.

For small and medium scale storage within the constraints of conventional digital VLSI technology two practical solutions exist:

The most important advantage of the register array, which basically consists of an array of D-type flip-flops, is that the designer can safely use standard cell elements to generate the register array, completely avoiding the time-consuming full-custom design effort. Most modern synthesis tools support these arrays, and many contemporary designs include relatively large amounts of such structures. But the register array design also has several disadvantages:


Figure 5.1: 2 x 2 SRAM array compared to 4 x 1 DFF array.

Figure 5.1 clearly shows the difference in silicon area. Even without any associated connections and peripherals, the full-custom realization of a 2x2 Static RAM (~1000um^2) occupies less than one sixth of the area of the registers (~6500um^2).

Although the advantages of using a RAM block are clear, it requires much more design effort than the standard cell based solution. Some semiconductor foundries have tools to automatically generate RAM blocks, while others offer design services for commercial products (in which, practically, the foundry employs a RAM design engineer). In the case of Aries, the chosen semiconductor foundry Austria Micro Systems (AMS) did not have a RAM generator, so the RAM cells had to be designed in full custom.

Although inherently Dynamic RAM (DRAM) circuits have a much higher density, their design is much more complicated (state of the art DRAM designs rely on a broad range of technology enhancements which are not common in normal digital CMOS technologies), requires more sophisticated clocking and is usually slower than Static RAM (SRAM) circuits.

Figure 5.2: General SRAM structure.

A generic SRAM array and its peripheral circuitry are shown in Figure 5.2. The SRAM array consists of a dense two-dimensional arrangement of the actual storage elements. For small memories it is possible to store one word of data in a row (for larger memories one row holds several words of data). All cells in one column share the same input signals (called the bitlines). Prior to read or write operations, the bitlines are charged to a known value by the pre-charge circuits. The row decoders are used to select one row in the array. The storage elements in the row are connected to the common bitlines, and either the value within the cell is sensed (read) by sense amplifiers or it is overwritten by write circuits, depending on the mode of operation. For large memories, an additional column decoder is used to select the desired word within a row.


A simple CMOS-SRAM cell consists of two cross coupled inverters and two access transistors connecting these two inverters to complementary bitlines.


Figure 5.3: Basic SRAM cell.

The two switches in Figure 5.3 are simple nMOS pass-transistors. A so-called wordline controls these pass-transistors. As long as the pass-transistors are turned off, the cell retains one of its two possible steady states.

A read operation from this cell is performed by pre-charging the bitlines to a known value (e.g. VDD) and enabling the wordline. As during any read operation only one row can be active (the row decoder guarantees this), each column (bitline) can be modelled by a capacitor representing all the parasitic capacitance of the bitline and the input capacitance of all the access transistors. Figure 5.4 shows the pre-charge circuitry and the simple SRAM cell itself, together with the column capacitances.


Figure 5.4: Equivalent SRAM cell during Read and Write operations.

Depending on the content (i.e., the state) of the cell, one of the bitlines will be pulled down by the nMOS transistor of the inverter with the logic "0" state, while the other bitline will remain at VDD.

Figure 5.5 shows the simulation results of two consecutive read operations. The nMOS transistors of the simulated RAM cell have dimensions of W=3um and L=0.8um, while the pMOS transistors and the access transistors are minimum-width transistors, having dimensions of W=2um and L=0.8um. The parasitic bitline capacitance is modeled as 1pF. The first read cycle starts at 160 ns with the activation of the wordline. The stored information is a logic "1": bitline rises to VDD while bitline' is pulled down by the second inverter. Notice that the pulled-down bitline is not discharged completely (in fact it only reaches 4 Volts), but that is enough for the sense amplifier to operate correctly. The second read operation starts at 180 ns with the activation of another wordline (not shown, which in fact is just the inverted first wordline). This time the stored information is a logic "0", and the switching of the bitlines can be seen clearly.


Figure 5.5: Simulated SRAM read operation.

A write operation is similar in nature to the read operation. Again the cell is accessed by enabling the wordline, but this time the bitlines are driven to a known state by the write circuitry. This write circuitry is designed to have a stronger current driving capability than the pre-charge and storage cell circuitry, and as a result the bitlines are driven beyond the inversion thresholds of the inverters within the SRAM cell.


Figure 5.6: CMOS SRAM Write operation.

The plot in Figure 5.6 is a snapshot of a simulation used to verify the functionality of the RAM design. The first three strips are the control signals: the Write enable signal activates the write mode, Wordline selects the row to be accessed, and Data is the value to be written to the selected cell. The voltages of the bitlines are plotted next, followed by the voltages within the SRAM cell (the inputs of the two inverters). The simulation snapshot displays consecutive write and read cycles. The first write cycle starts at 220 ns. A logic "0" is written to a cell within the column which is not the cell that we are concentrating on. At 240 ns a logic "1" is written into the cell. The Wordline goes high, indicating a write to the cell we are observing. Notice the bitline swing for the write operation. The last two strips also clearly show the inverters switching: a new value has been written. There is a read cycle starting at 280 ns. At 300 ns our cell is accessed for a read operation. Notice the relatively low bitline swing and the perturbation on the inverter. It is clear that a perturbation as high as the threshold voltage of the nMOS transistor may cause the inverter to switch state and destroy the content of the cell. Kang and Leblebici [19] give a conservative analytical expression for the sizing of access and pass transistors to prevent overwrite during read:

(5.3)


Substituting the values for the AMS 0.8um process, VDD=5V and Vt,n=0.75V, we get

(5.4)

The simulation shows a second write operation starting at 340 ns. At 360 ns a logic "1" is written to the cell activated by the Wordline.


To achieve a higher RAM density, Aries uses dual-port RAM cells. As each port must be able to access the cell independently of the other, the basic access lines Bitline, Bitline' and Wordline need to be duplicated. Two more pass transistors are added to control the access of the second port (see Figure 5.7).

Figure 5.7: Dual-Port CMOS SRAM cell schematic.

An efficient layout for the basic cell is the key point of any dense RAM circuit layout. There are a few important factors to consider when designing a custom cell like this:


Figure 5.8: Layout of the optimized Dual-Port CMOS SRAM cell.

Figure 5.8 gives the basic Dual-Port CMOS SRAM cell used within Aries. The basic cell measures 30.2um x 19.2um.


A very simple pre-charge circuit is used for the realization of the RAM. Unlike many SRAM designs, the SRAM in Aries does not use clocked pre-charge circuits. The RAM is targeted to operate at clock speeds of up to 100 MHz, and a clocked pre-charge circuit would have needed very accurate and complicated clock timing. The schematic of the static pre-charge circuit structure can be seen in Figure 5.9.

Figure 5.9: Pre-charge circuit schematic.

The pMOS transistors driving the bitlines have a width of 6um; all the other transistors have a width of 2um. While providing a very simple column pre-charge mechanism, this structure also has some drawbacks:

The most important is that, as there is a constant pre-charge voltage on the bitline, any effort to pull the bitline down must fight against the pre-charge circuit. One possible remedy would be to use minimum-size pMOS transistors in the pre-charge circuit, but this is not feasible, as a fast recovery is required to pull the bitlines from their low states back to their high states. A weak pull-up would result in a slower response time in read access. On the other hand, the write operation needs to pull the bitline down even further, in order to be able to switch the internal inverters. A relatively strong write circuit easily accomplishes this task. This in turn has another negative effect: after any write operation one of the bitlines is left at a relatively low voltage level. Thus, a subsequent read operation may under certain circumstances overwrite the content of the cell. Fortunately, this is not a major issue, since write operations are only required to update the weights; therefore, the write timing is not critical. In Aries, a write operation must be followed by an idle state (to let the bitlines recover) prior to a read operation. This restriction hardly affects the operation of the circuit.
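The access restriction described above can be expressed as a small behavioural model in Python. The class and method names are hypothetical, and the assumption that a single idle cycle is sufficient for the bitlines to recover is made only for this illustration; the text merely requires an idle state between a write and the following read.

```python
class BitlineRecoveryModel:
    """Behavioural RAM model enforcing write -> idle -> read ordering."""

    def __init__(self, words=32):
        self.mem = [0] * words
        self.bitlines_recovered = True

    def write(self, addr, value):
        self.mem[addr] = value
        # After a write one bitline is left at a low level.
        self.bitlines_recovered = False

    def idle(self):
        # The static pre-charge circuit pulls both bitlines back up.
        self.bitlines_recovered = True

    def read(self, addr):
        if not self.bitlines_recovered:
            raise RuntimeError("read directly after write: insert an idle cycle")
        return self.mem[addr]


ram = BitlineRecoveryModel()
ram.write(3, 42)
ram.idle()
value = ram.read(3)
```

Since the coefficients are written only when the filter is reprogrammed, the extra idle cycle costs nothing during normal filtering.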

The main issue in the RAM design for Aries was the speed of the read operation, and the sense amplifier is the block that most strongly dictates this speed. The sense amplifier was developed by B. Aksoy [20]. A number of different architectures were evaluated. Finally, a two-stage amplifier with a cross-coupled pMOS amplifier as the first stage and a conventional differential amplifier as the second stage was found to give the best performance. Figure 5.10 shows the schematic and the transistor sizing of the sense amplifier circuit. Detailed evaluation and simulation results of this two-stage sense amplifier can be found in [20].

Figure 5.10: Schematic of the Sense Amplifier.

The write circuit is a simple differential stage that is driven to saturation by Data and Data'. Two pass transistors and the current source of the differential amplifier are controlled by the Write signal. Figure 5.11 shows the schematic of the write circuit together with the sizing of the non-minimal transistors.

Figure 5.11: Schematic of the Write circuitry.

A typical RAM circuit consists of an array of RAM cells arranged in rows and columns. The RAM can be laid out so that each row holds exactly one word of data. For most RAM designs, however, this would generate blocks with extreme aspect ratios, which are unacceptable for an efficient design because of their awkward geometry, the parasitics associated with the very long bitlines, and the complicated decoder structure. For these reasons, RAMs are usually designed to contain more than one word per row, with a two-stage decoding process: first the row decoder selects the row of interest, and then the column decoders choose the required word from the selected row. Aries, however, requires only 32 different values of 8 to 16 bit data words (the final word length of 10 bits had not yet been fixed at the beginning of the design). It was therefore possible to build an array without column decoders. The resulting RAM array has an aspect ratio of 2:1, which is perfectly acceptable.

Figure 5.12: Row decoder schematic.

Five address lines are necessary to decode 32 individual rows. For the design of Aries a two-stage decoding structure was used. Figure 5.12 illustrates the decoding circuitry. The box on the top is the first stage decoder (pre-decoder). Please note that the block in the figure is not logic optimized and contains a number of redundant blocks. The block diagram represents the actual logic elements realized on the layout (the AND gate, for example, is realized by a combination of NAND and NOT). The local inverters on the eight blocks are used to generate the complementary signals where needed and thereby reduce the number of vertical wires in the decoder.
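
The principle of two-stage decoding can be sketched in a few lines of Python. This is only an illustration and not the circuit of Figure 5.12: the split of the five address bits into a 2-bit and a 3-bit group, as well as the names `one_hot`, `predecode` and `row_select`, are assumptions made for the example.

```python
def one_hot(value, width):
    """Return 2**width lines of which only line `value` is high."""
    return [1 if i == value else 0 for i in range(2 ** width)]


def predecode(address, low_bits=2, high_bits=3):
    """First stage: decode two address groups separately (assumed 2 + 3 split)."""
    low = address & ((1 << low_bits) - 1)
    high = address >> low_bits
    return one_hot(low, low_bits), one_hot(high, high_bits)


def row_select(address):
    """Second stage: a row is selected when both of its pre-decoded lines are high."""
    low_lines, high_lines = predecode(address)
    # Row index = 4 * (index of high line) + (index of low line)
    return [h & l for h in high_lines for l in low_lines]


if __name__ == "__main__":
    rows = row_select(19)
    print(rows.index(1), sum(rows))  # prints "19 1": exactly one row is active
```

With this split the second stage needs only 2-input AND functions and 4 + 8 = 12 pre-decoded lines, instead of 32 five-input gates driven by true and complementary address lines.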

There are two problems associated with writing to the RAM blocks:

Both of these problems can be addressed with relatively low design effort. For the first problem, a very simple state machine as shown in Figure 5.13 can be used to generate the idle times. The circuit in Figure 5.13 generates synchronous read and write signals depending on the state of "en".

Figure 5.13: Read-Write state machine.
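
The timing rule that such a state machine has to enforce (a write must be followed by an idle cycle before the next read) can be illustrated with a small behavioural sketch. It does not model the circuit of Figure 5.13 or its "en" input. The function name `schedule` and the operation labels are hypothetical, and it is assumed here that consecutive writes need no idle cycle between them.

```python
def schedule(requests):
    """Insert an 'idle' cycle between a write and a directly following read."""
    cycles = []
    for op in requests:
        if op == "read" and cycles and cycles[-1] == "write":
            cycles.append("idle")  # lets the bitlines recover to their high state
        cycles.append(op)
    return cycles


if __name__ == "__main__":
    print(schedule(["write", "read", "read"]))
    # prints ['write', 'idle', 'read', 'read']
```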

Aries uses a 2:1 multiplexer to achieve time domain multiplexing. A 3:1 multiplexer can be used to account for the write state, where the address lines are supplied externally. As an alternative, a simple counter could be added to the design to generate the addresses during a write cycle. These issues are discussed in Section 7.2.1.

The layout of a single RAM column has evolved considerably during the design process. Figure 5.14 compares the initial floorplan to the final floorplan.

Figure 5.14: Floorplan of the RAM column.

The initial floorplan tries to take advantage of the symmetric nature of the dual port architecture, in which the sense amplifiers and the pre-charge circuit of the other port are placed on the ends of the blocks. The first row of the RAM will always contain the value 0. A special cell consisting of this "zero-RAM Cell" and the write circuit was designed. This cell also switched the order of the bitlines, which made it possible to use the same pre-charge and amplifier circuits. Although the idea is appealing at first sight, it creates a few additional problems:

The final floorplan has the write circuitry on one end of the block while two read ports are on the same side. This minimizes the routing for the next adder stages. The routing of the outputs requires ten Metal-2 busses to be placed after the sense amplifier block (as there are ten outputs). It was possible to place the pipeline registers underneath these busses, which saved a significant amount of silicon area.

Figure 5.15: Floorplan layout of the complete Dual-Port SRAM. Detailed layout of the circled area is shown in Figure 5.16.

Figure 5.15 shows the complete layout. A close-up (marked with the circle on the complete layout) can be seen in Figure 5.16. The close-up shows parts of the first stage and second stage row decoders, the RAM cell array, the pre-charge circuit, the sense amplifier, as well as the pipeline registers underneath the output bus. The Metal-2 lines are drawn only as outlines, as they would otherwise completely obstruct the view of the cells.

The layout of a single RAM block, consisting of two separate address decoders, 32 rows of 10 Dual-Port SRAM cells, all the associated sense amplifier, write and pre-charge circuitry, and the pipeline registers, occupies an area of 390um x 815um (0.32mm^2). With these dimensions the RAM block is by far the most area consuming of all the Aries blocks. This was already predicted in the pre-design floorplan shown in Figure 4.3.

Figure 5.16: Close-up of the Dual-Port SRAM layout (area highlighted in Figure 5.15).

A systolic structure is made up of an array of identical basic processing elements with the same type of connections. The name systolic adder is actually misleading for the particular adder used within Aries. The name comes from the earlier adder design used within the Taurus architecture, where the partial results of all eight bitplanes were shifted and added in a single structure consisting of eight adder rows of five adders each (see Figure 3.6). In the present Aries architecture, the systolic adder is required to shift-add only four numbers in each cycle, as a result of time multiplexing. We have nevertheless retained the name of this block, since the essence of the operation has not changed. This section gives an architectural overview of the designed adder. A detailed analysis discussing the design methodology will be presented in Chapter 8.

The algorithm in Aries requires that four numbers corresponding to the partial sums of bitplanes be added in one cycle. This operation can be summarized as:

(6.1)

The multiplication by a power of two represents a shift-left operation. Symbolically, this can be expressed as in Figure 6.1.

Figure 6.1: Symbolical representation of the shift-add operation used in Aries.
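
The shift-add operation can be sketched as follows. The sketch assumes that the four partial sums are weighted with consecutive powers of two (2^0 to 2^3) in the order in which they are passed. The function name `shift_add` and the example values are illustrative and are not taken from the Aries design.

```python
def shift_add(partial_sums):
    """Add the partial sums, shifting the i-th one left by i bit positions."""
    total = 0
    for i, p in enumerate(partial_sums):
        total += p << i  # multiplication by 2**i realized as a shift-left
    return total


if __name__ == "__main__":
    # 3*1 + 0*2 + 2*4 + 1*8 = 19
    print(shift_add([3, 0, 2, 1]))  # prints 19
```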

As no general building blocks for the addition of four binary numbers are readily available, this operation must be broken down into a simpler form so that it can be calculated with the basic CMOS building blocks. For the addition of more than two binary numbers (multi-operand addition), two different structures are commonly used [5]: adder arrays built from carry propagate adders and adder arrays built from carry save adders.

Figure 6.2: Multi-operand adder array with carry propagate adders.

Figure 6.2 shows the basic structure of a multi-operand adder array which is constructed with carry propagate adders. The structure is called carry propagate because the carry from each adder is propagated directly to its neighbour. Only the sums of the adders within a row are passed to the next stage. The carries of all stages are evaluated with additional adders. The main disadvantage of this structure is the heavily serial nature of the operation. A worst case vector may cause the final result to be delayed by more than m*n adder delays in an m x n adder array. A detailed analysis of the carry-ripple effect that causes this problem will be given in Section 6.1.2.

The carry output bit of a FA is one bit position more significant than the sum output bit of the same adder. This is the reason why the carry is propagated to the neighbouring adder in the carry propagate structure. It can be done differently, though: in the carry save structure the carry is saved, that is, it is passed diagonally to the adder on the left of the adder that receives the sum output. This scheme is illustrated in Figure 6.3.

Figure 6.3: Multi-operand adder array with carry save adders.

Notice that the last stage in the figure is in fact another carry propagate adder. The carries are saved until the last operation, which leaves two vectors: one consisting of the sums and the other of the carries of the previous operations. These two numbers still have to be added. If a simple carry propagate adder is used as the last step, the worst case delay will be m+n adder delays for an adder array of dimensions m x n. This can be improved further if a faster adder is used as the final adder.
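
A behavioural sketch of the carry save principle is given below. It operates on non-negative Python integers used as bit vectors rather than on individual FA cells, and the names `carry_save_step` and `carry_save_add` are chosen for this example only. Each step corresponds to one row of FAs working in parallel, and only the final addition propagates carries.

```python
def carry_save_step(a, b, c):
    """One row of FAs: reduce three operands to a sum vector and a carry vector."""
    sum_vec = a ^ b ^ c
    # The carry of each bit position is one position more significant,
    # so the carry vector is shifted left by one bit.
    carry_vec = ((a & b) | (a & c) | (b & c)) << 1
    return sum_vec, carry_vec


def carry_save_add(operands):
    """Add several operands, saving the carries until the final addition."""
    ops = list(operands)
    while len(ops) > 2:
        s, c = carry_save_step(ops[0], ops[1], ops[2])
        ops = [s, c] + ops[3:]
    # Final stage: an ordinary (carry propagate) addition of the two vectors
    return sum(ops)


if __name__ == "__main__":
    print(carry_save_step(5, 9, 12))      # prints (0, 26)
    print(carry_save_add([5, 9, 12, 7]))  # prints 33
```

For four operands two carry save rows are needed before the final adder, and no carry ripples within these rows.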

The most basic addition block is the half adder. The half adder has only two 1-bit inputs, A and B, whose Sum can be calculated by a simple XOR operation. The Sum output is sufficient to express the sum of two 1-bit numbers for three out of the four possible input combinations. The sum of A=1 and B=1 cannot be expressed by a single bit, and a result with a higher significance than the Sum is required. This result is known as the Carry-out, which states that an overflow has occurred.

The 1-bit FA is an enhanced half adder circuit with an additional Carry-in signal, which enables it to be cascaded with other adders. It is therefore commonly used as a basic building block for generic adder arrays. The truth table of the FA is given in Table 6.1. (The truth table of the half adder corresponds to the first four entries in the truth table, where Carry-in=0.)

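Table 6.1: Truth table of 1-bit FA.

| Carry-In | A | B | Carry-Out | Sum |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 |
| 0 | 1 | 1 | 1 | 0 |
| 1 | 0 | 0 | 0 | 1 |
| 1 | 0 | 1 | 1 | 0 |
| 1 | 1 | 0 | 1 | 0 |
| 1 | 1 | 1 | 1 | 1 |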
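
The truth table can be reproduced with a short logic-level sketch in which the FA is composed of two half adders and an OR gate. This is a textbook decomposition used here for illustration only. It is not the transistor-level CMOS FA used in Aries, and the function names are assumptions.

```python
def half_adder(a, b):
    """Return (carry_out, sum) of two 1-bit inputs."""
    return a & b, a ^ b


def full_adder(carry_in, a, b):
    """Return (carry_out, sum) of two 1-bit inputs and a carry-in."""
    c1, s1 = half_adder(a, b)
    c2, s = half_adder(s1, carry_in)
    return c1 | c2, s


if __name__ == "__main__":
    print("Carry-In A B Carry-Out Sum")
    for carry_in in (0, 1):
        for a in (0, 1):
            for b in (0, 1):
                carry_out, s = full_adder(carry_in, a, b)
                print(carry_in, a, b, carry_out, s)
```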

As the FA is the most widely used structure in a wide range of computationally complex functions, a significant amount of research effort has been spent on the realization of efficient adder structures. A quick glance at the truth table clearly reveals a classical XOR behaviour. XOR type functions typically have difficult CMOS realizations. A number of different realizations of FA's have been published [4,21,22,23,24]. A well known CMOS FA structure was chosen in Aries, since this adder is one of the most compact, robust and fast FA alternatives. The detailed design of a high performance FA cell based on this adder will be discussed in Section 8.3.2. The schematic of the adder and the transistor dimensions are shown in Figure 6.4.

Figure 6.4: Circuit schematic of the CMOS FA.

Please note that in this implementation, Carry' is used to generate Sum; as a result the Carry signal can be generated before the Sum signal.

A total of n 1-bit FA's can be used to add two n-bit binary numbers (to be more precise, n-1 FA's and a half adder, as the first addition would normally not have a Carry-in). This arrangement of FA's, where the Carry-out signal of each FA is connected to the Carry-in signal of the FA that adds the next more significant bits, is known as the Ripple Carry Adder. The generic arrangement of the ripple carry adder is given in Figure 6.5.

Figure 6.5: The Ripple Carry Adder.

Compared to all the known n-bit binary adders, the ripple carry adder is the most compact solution. It requires a small number of operational blocks (1-bit adders) and has a minimum routing overhead, as only the Carry-out signals have to be connected to the Carry-in signals of the adjacent blocks. In a well designed layout of a FA circuit this connection can be made by simple abutment, which makes the design of the n-bit adder extremely simple.

The main drawback, however, is that this realization is also the slowest parallel solution available. Although the adder accepts data in parallel, the operation is inherently serial, because the addition of the i-th bit needs the Carry-out of the (i-1)-th bit for the correct result. Starting from bit 0, the actual Carry is generated and rippled through all the stages to create the final result, hence the name ripple carry adder. The worst case scenario occurs when the carry of the first bit causes all the Sum bits to change. The delay for this operation is roughly n FA delays.
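
The serial nature of the ripple carry adder can be seen in a bit-level sketch, where each loop iteration stands for one FA and needs the carry of the previous iteration. The sketch is behavioural only (it carries no timing information), and the names `full_adder` and `ripple_carry_add` are chosen for the example.

```python
def full_adder(carry_in, a, b):
    """Return (carry_out, sum) of three 1-bit inputs."""
    total = carry_in + a + b
    return total >> 1, total & 1


def ripple_carry_add(x, y, n):
    """Add two n-bit numbers bit by bit and return (n-bit result, carry_out)."""
    carry = 0
    result = 0
    for i in range(n):
        # Stage i cannot produce its outputs before stage i-1 has delivered its carry
        carry, s = full_adder(carry, (x >> i) & 1, (y >> i) & 1)
        result |= s << i
    return result, carry


if __name__ == "__main__":
    # Worst case for 10 bits: the carry generated at bit 0 ripples through all stages
    print(ripple_carry_add(1023, 1, 10))  # prints (0, 1)
    print(ripple_carry_add(5, 9, 10))     # prints (14, 0)
```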

Figure 6.6: Simulated ripple effect in a 10-bit Ripple Carry Adder.